You’ve probably seen this in a list of features in SQL 2008, but what exactly is it and how can you take advantage of it? A sparse column just means that it will not store NULL. When you have a NULLable field, NULLs are actually stored in normal columns. It doesn’t use much space, but still. So, why is this a problem? Well, if you have an index on a NULLable field, the index will have as many NULLs as the field and therefore not be particularly selectable. In some tables, like the Objects table in CMS there are MANY NULLable fields, which on most records are set to NULL.

A sparse column is stored as XML, so you only want to add this to fields that have at least 50% NULLs, since there’s an overhead for each field that has data. For example, an integer field non sparse uses 4 bytes. The same field with sparse, uses 8 bytes, but for non-NULL data. Let’s do a little experiment.

CREATE TABLE test1 (

Field1 INT,

Field2 INT SPARSE NULL,

Field3 INT SPARSE NULL

)

CREATE TABLE test2 (

Field1 INT,

Field2 INT NULL,

Field3 INT NULL

)

INSERT INTO test1

(Field1, Field2, Field3)

SELECT TOP 100000

ROW_NUMBER() OVER (ORDER BY C.id), NULL, NULL

FROM sys.syscomments AS C

CROSS JOIN sys.syscolumns

INSERT INTO test2

(Field1, Field2, Field3)

SELECT TOP 100000

ROW_NUMBER() OVER (ORDER BY C.id), NULL, NULL

FROM sys.syscomments AS C

CROSS JOIN sys.syscolumns

EXEC sp_spaceused 'test1'

EXEC sp_spaceused 'test2'

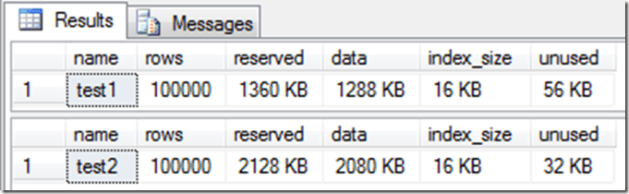

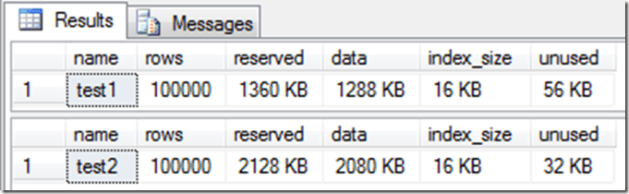

We create two tables, almost identical. The only thing different between these are that Field2 and 3 are SPARSE in test1. Then we populate both tables with 100000 records, and set NULL for Field2 and 3. This is the result I got from sp_spaceused:

This tells me that for 100.000 records, two NULLable fields will all saves me (about) 1 MB with data when using sparse. Doesn’t sound much, but if you compare it to the one without sparse it’s almost 50%. And that’s with only two NULLable fields and no indexes. In one of our customers CMS database there’s over 300.000 records in their Objects table. This table has 55 NULLable fields. Doing a SELECT * on this tables, I see that most of the NULLable fields are actually NULL more than 80% of the time. Five of them are indexed too.

So, what’s the downside of sparse columns? Well, for starters it uses the double amount of space to store data, when it’s not NULL. You can not use text, ntext, geometry and geography, you can’t have default values on them. It is also not supported by merge replication. But it’s supported by AppFrame R3, so start using it!

One thing I forgot to mention is column sets. If you add a column set to your table with sparse columns, the sparse columns will be stored in this column (remember, it’s all just XML). Keep in mind that using column sets have more restrictions. For instance, you can’t change a column set. If you have a sparse columns in your table and you want to add a column set, you need to re-create the table.

One thing that’s “cool” about column sets though is that when you select * you get the column set (with your sparse columns) as an XML:

CREATE TABLE test3 (

Field1 INT,

Field2 INT SPARSE NULL,

Field3 INT SPARSE NULL,

MySparseFields XML COLUMN_SET FOR ALL_SPARSE_COLUMNS

)

INSERT INTO test3

(Field1, Field2, Field3)

VALUES

(1, 1, 1),

(2, NULL, NULL),

(3, 3, 3),

(4, NULL, NULL)

SELECT *, Field3

FROM test3

WHERE Field2 = 1

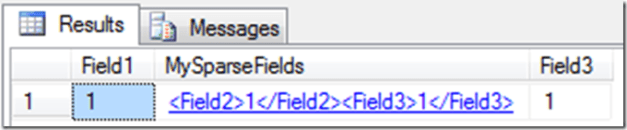

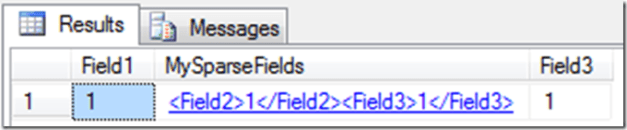

The result:

If you think this is something you should use, read more about it here:

My favorite bloggers at SQL Skills:

MSDN:

Using Sparse Columns

Using Column Sets